Announcing Icechunk 2: Better Consistency, Performance, and Reliability for Tensor Storage

CEO & Co-founder

Staff Engineer

When we released Icechunk 1.0 last July, we declared it production-ready and committed to format stability. Since then, adoption has exceeded our expectations. Teams across weather forecasting, climate science, neuroscience, and AI/ML have pushed Icechunk into scenarios we didn’t fully anticipate—repositories with tens of thousands of commits, hierarchies with 100k+ arrays, and multi-terabyte distributed write pipelines running 24/7. All of this real-world usage has given us an incredible window into what works well and what needs to evolve.

Today, we’re excited to announce the general availability of Icechunk 2, a major release that delivers fundamental improvements to consistency, performance, and reliability—while also introducing powerful new capabilities for managing tensor data at scale.

Seba gave a preview of what was coming back in December. Now it’s here. Let’s dig into what’s new.

Before we dive in: if you have existing Icechunk 1 repositories, don’t worry. Icechunk 2 fully reads and writes V1 repos, and when you’re ready, migration is a quick metadata-only operation—no chunk data is copied or rewritten. We’ve designed this release so you can adopt it at your own pace. More details in the migration section below.

A new foundation for consistency

The single biggest architectural change in Icechunk 2 is the unified repo info file. Think of this as a “table of contents” of the Repository. In V1, refs (branches and tags) and repository config were stored as independent objects in storage. Commits against a single branch have always been safe, but other concurrent operations—branch/tag creation/deletion, garbage collection, config changes—could still race against each other, and there was no way to get a consistent, atomic view of the full repository state.

Icechunk 2 fixes this at the foundation. All repository state is now referenced from a single unified object:

- The full snapshot ancestry—parents, timestamps, commit messages—lives in one place. No more sequential snapshot reads to reconstruct history.

- All branches, tags, and deleted tags.

- Repository status, metadata, config, and feature flags.

- An operations log: an immutable audit trail of every mutation to the repo. Every commit, amend, tag/branch create/delete, GC run, config change, and migration is recorded with a timestamp and a backup pointer to the previous repo info file. (See Operations Log.)

Every mutation to this unified state is applied using optimistic concurrency control with automatic retry and backoff on conflict. This is a much stronger consistency model than V1, and it means that operations like garbage collection, branch updates, and config changes are all serializable with respect to each other. The single top-level entry point file also makes it much quicker and simpler to ascertain the overall state of a repo from a single read operation.

Powerful new features

Move, shift, and reindex

One of the most common pain points we heard from Zarr and Icechunk users was the inability to reorganize their data hierarchy without expensive chunk rewrites. Need to move an array from one group to another? Fix a typo in an array name? In V1, that meant reading and rewriting every single chunk. 😝

In Icechunk 2, move_node(from, to) is a cheap metadata-only operation. No chunks are copied or rewritten. Similarly, shift_array(path, offset) shifts all chunks by a chunk offset, and reindex_array(path, fn) allows arbitrary chunk coordinate transformations via a user-provided function—all without touching data.

We’ve been dogfooding shifting with our design partner Brightband; its perfect for maintaining the “rolling forecast data cubes” they use for real-time data in the Earthmover Data Marketplace, where the cube contains the most recent 15 days of forecasts.

Rectilinear chunk grids

Icechunk 1 only supported regular chunk grids where every chunk has the same size. Icechunk 2 adds support for Zarr 3’s rectilinear (variable-sized) chunk grids, where each chunk along a dimension can have a different size. This is critical for applications like building virtual datasets from unevenly partitioned NetCDF files or appending data with irregular time intervals (like months). Combined with the move/shift operations above, this also makes prepends and inserts easy—something that’s never been possible with native Zarr, where only appends are straightforward. The Icechunk format only keeps track of the number of chunks along an axis—the sizes are left to the Zarr library. We are now able to represent any complicated chunk grid that Zarr users may invent.

Amend and anonymous snapshots

Many users want Icechunk’s transactional guarantees without accumulating long commit histories. The new amend() operation replaces the previous commit on a branch instead of creating a new one. And session.flush(message) creates a detached anonymous snapshot without advancing the branch—useful for checkpointing during long-running pipelines. Both are first-class citizens tracked in the repo info and operations log.

Repository status and feature flags

Repos can now be marked as Online or ReadOnly (with a reason), protecting against accidental writes. Per-repo feature flags allow toggling specific operations like move_node, create_tag, or delete_tag, giving administrators fine-grained control.

Repository-level metadata

V1 supported arbitrary metadata on commits. V2 extends this to the repository itself—key-value metadata that lives at the repo level and can be updated and retrieved independently of any snapshot.

HTTP storage and redirect storage

Icechunk repos can now be served read-only over HTTP/HTTPS, enabling public or CDN-hosted repositories without requiring object store credentials. A companion RedirectStorage backend follows HTTP 302 redirects to resolve the actual storage location (S3, R2, Tigris, GCS), enabling flexible CDN and load-balancing architectures.

This feature is important for partners like Dynamical.org who want to serve Icechunk repos from a stable HTTP URL while retaining flexibility to move the underlying storage location across regions or cloud providers.

More ergonomic distributed writes

We’ve streamlined the distributed write workflow. While Icechunk V1 supports distributed writes, this capability came with some significant restrictions; specifically, session forking could only happen on clean sessions. This emerged as a real pain point for users, who were used to being able to make some changes in a parent session (e.g. resizing an array) before kicking off a bunch of child workers to write the data.

Icechunk no longer has this limitation. ForkSession is now based off an anonymous snapshot that captures uncommitted state, allows writes but disallows commits, and is fully serializable (picklable) for distribution to workers. Fork sessions are merged back via session.merge(fork_session) as before.

Pretty reprs and introspection

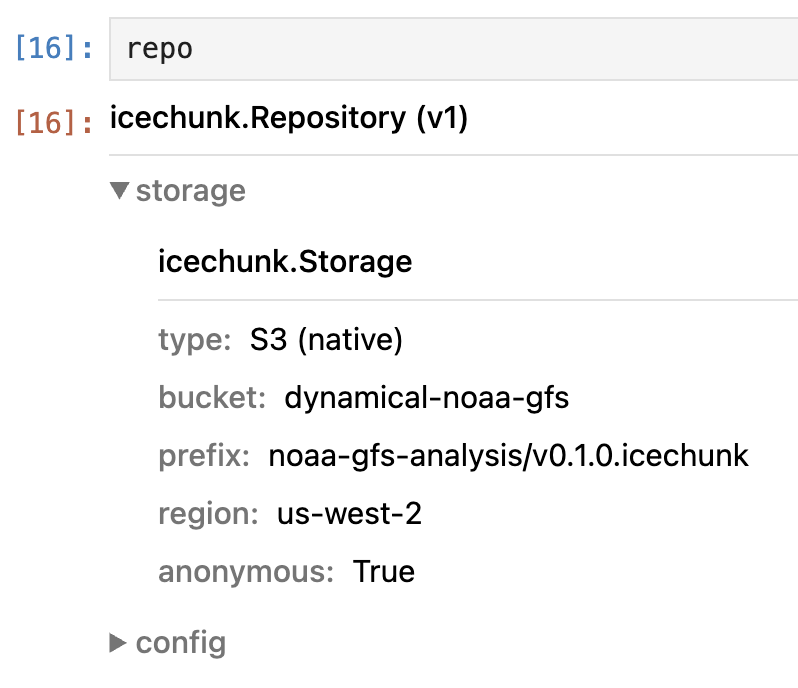

We’ve overhauled the Python developer experience. All Python classes now define complete string, executable, and HTML reprs, so you can inspect repository state directly in a notebook. For example, here’s the HTML repr for a repository object:

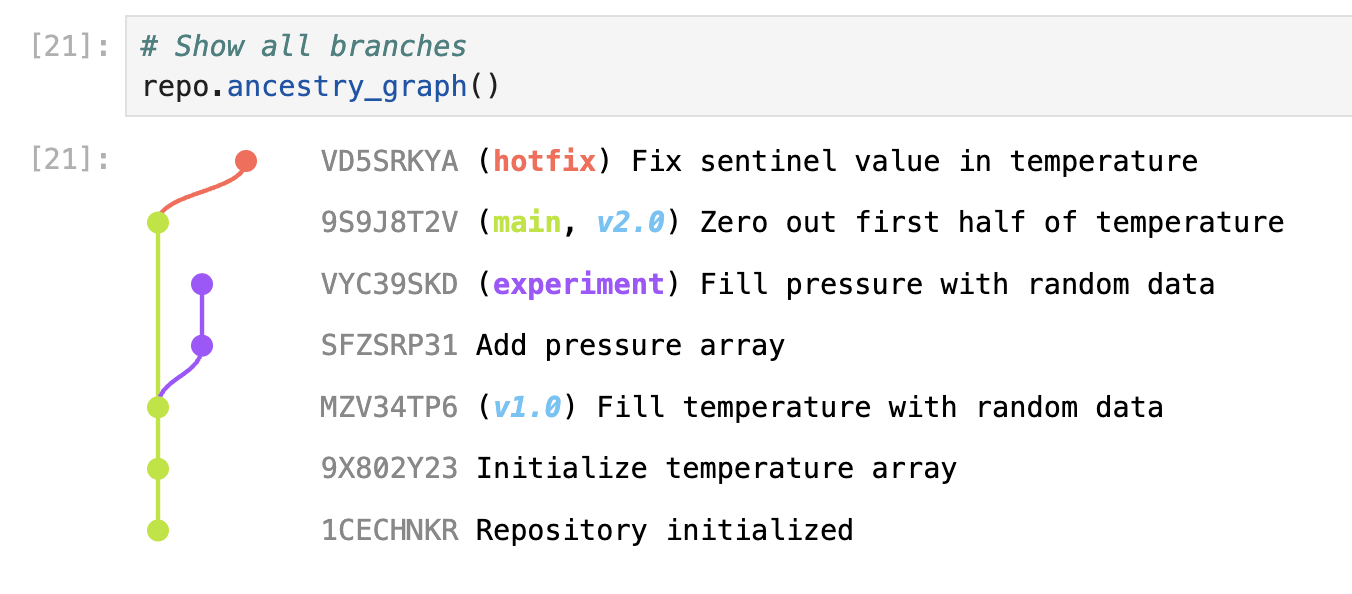

And repo.ancestry_graph() renders a visual commit graph, making it easy to understand branching and tagging history:

New inspect_snapshot(), inspect_repo_info(), and inspect_manifest() methods return detailed JSON introspection of internal structures, useful for debugging and understanding exactly what’s on disk.

Performance improvements

The unified repo info file is not just about consistency—it’s a major performance win. Because all snapshot ancestry now lives in a single file, ancestry lookups are O(1) instead of requiring sequential reads across many objects. Expiration and garbage collection are dramatically faster on repos with long histories.

Beyond that:

- Parallel flush: array nodes are flushed in parallel during commit, significantly speeding up commits with many arrays.

- Concurrent transaction log fetching: transaction logs are fetched concurrently during rebase, speeding up conflict detection.

- NodeSnapshot cache: frequently accessed nodes are cached on the Snapshot, reducing deserialization overhead.

- Zstd dictionary compression for virtual chunk URLs: the often-repetitive S3 paths in virtual chunk manifests are automatically compressed using a trained zstd dictionary, reducing manifest sizes substantially.

Reliability and storage resilience

We’ve hardened Icechunk’s interaction with cloud storage in meaningful ways:

- Expanded retries on HTTP 408 (timeout), 429 (rate limit), 499 (client closed), plus connection resets and stalled stream detection. Configurable

max_tries, backoff, and per-operation timeouts. - Timeout settings wired through to the S3 client: connect, read, operation, and attempt timeouts, with stalled stream protection and a configurable grace period.

- GC consistency: garbage collection now handles concurrent repo modifications via retry with exponential backoff. It detects and reprocesses when new snapshots appear mid-collection, and properly rewrites parent pointers when deleting snapshot chains.

- User agent headers: all requests to the object store include

icechunk-rust-<version>for tracing and debugging.

Rigorous testing

We’ve invested heavily in testing to match the ambition of this release:

- Our existing suite of stateful and property tests were extended with move operations, two-version compatibility testing (using

third-wheel!), GC/expiration invariants, and upgrade scenarios. - We took a page out of the AWS S3 teams’ book and wired up the

shuttlecrate for concurrency permutation testing. This approach explores randomized interleavings of concurrent operations and helped us catch race conditions during development. - Fault injection testing with

toxiproxymeans that Icechunk 2 is much more robust to flaky network conditions. This proxy simulates connection resets, stalls, and other network failures to validate retry logic under realistic conditions. - Icechunk now includes a nascent suite of

criterionmicrobenchmarks for snapshot serialization, manifest writing, and asset manager operations. This helped us speed up many common workloads that involve a large number of virtual references. - Finally, the entire unit test suite is parameterized over both V1 and V2 formats to ensure that we break nothing.

WASM support

Icechunk core now compiles to WASM with a single-threaded tokio runtime, opening the door to browser and Node.js usage. This was made possible by a major simplification of the storage layer in Icechunk 2—storage instances are now much simpler and more composable, which enabled the new HTTP, redirect, and WASM storage backends without adding complexity. We’re excited about the possibilities for interactive data exploration directly in the browser and potential integration with tools like Browzarr and deck.gl raster.

Battle-tested in production

Icechunk 2 isn’t just new—it’s already proven. The V2 format has been running in Arraylake’s production servers for weeks, handling real customer workloads. This extended burn-in period gave us confidence that the new consistency model, storage resilience improvements, and performance optimizations hold up under sustained, real-world usage.

The migration path

We understand the pain that format migrations can cause, and we’ve designed Icechunk 2 to minimize disruption.

Icechunk 2 fully interoperates with Icechunk 1. The Icechunk 2 library continues to support reading, writing, and managing V1 repositories. You don’t have to migrate anything if you don’t want to.

When you’re ready, migrate_1_to_2() (with dry-run support) handles the upgrade. The migration only creates / modifies metadata files. No chunk data is duplicated or rewritten, and the process is safe under the presence of failure or disconnects. We also have support in the Earthmover platform to run migrations for you.

The key thing to remember: once a repo is migrated to V2 format (or created as V2), it can’t be opened by the older Icechunk 1.x library. Update all your pipelines to the Icechunk 2 library before migrating.

Python API changes

Despite the scope of the internal changes, the Python API is almost entirely backward compatible—most users won’t need to change a single line of code. A couple of minor changes to be aware of:

- Enum variants are now

snake_case(e.g.,SessionMode.writableinstead ofSessionMode.Writable). manifest_files()has been renamed tolist_manifest_files().

Other improvements:

- All Python classes now define complete string, executable, and HTML reprs.

- Initial support for free-threading is included. This feature is lightly tested, and we welcome contributions to help make it robust.

Get started

Icechunk 2 is available today:

pip install icechunkHead over to icechunk.io for documentation and examples, or dive into the GitHub repository. We welcome your issues and pull requests.

If you’re an Earthmover platform customer, Icechunk 2 support is already available in Arraylake. Reach out to your account team to discuss the upgrade path for your data.

Icechunk 2 is the result of deep collaboration between the Earthmover engineering team and our growing open-source community. Thanks to everyone who filed issues, tested alpha builds, and pushed Icechunk into unexpected territory. You made this release better.

CEO & Co-founder

Staff Engineer